Maybe you didn't know. I sure didn't.

ChatGPT makes up data that it doesn't have. I'll admit that I've laughed about its obvious hallucinations, but then it hit me. What about the ones that aren't obvious?

It can be hard to see the errors when they're mixed in with some pretty fantastic advice. And it's so good at finding solutions for you. You've grown used to incredible results, so why wouldn't you take those answers at face value?

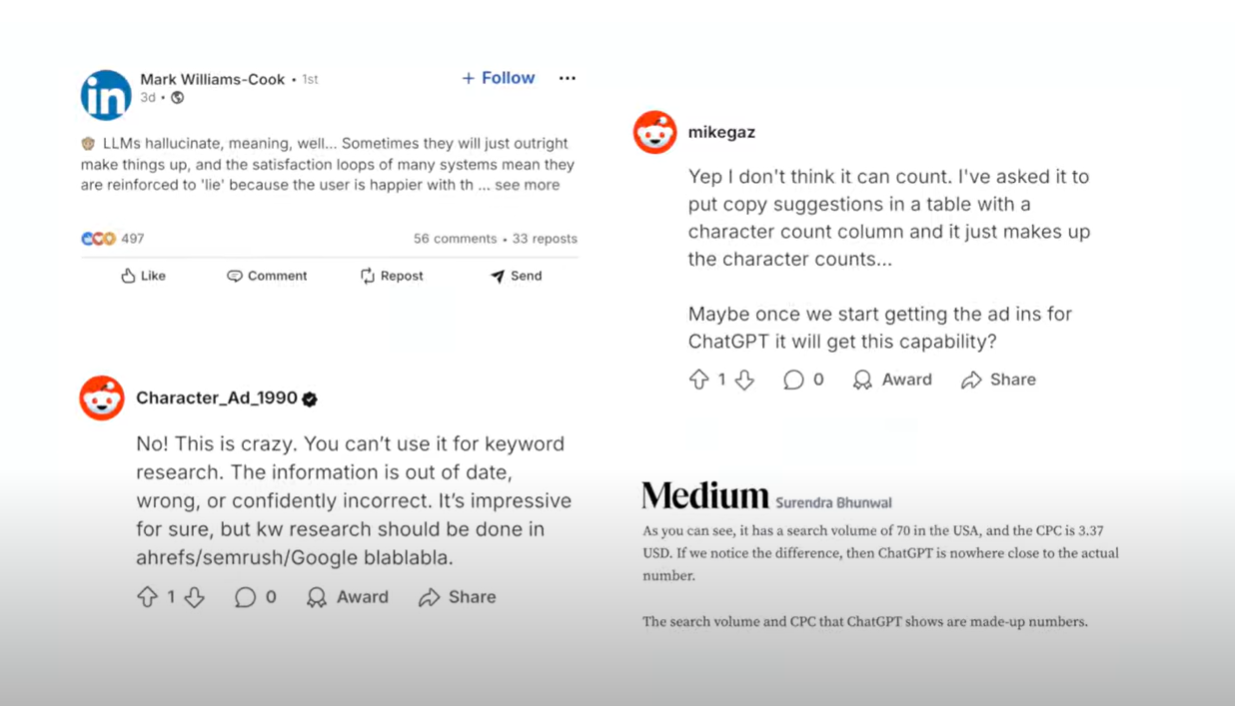

Hallucinations are funny until they aren't.

This goes beyond miscounting the Rs in "strawberry." If I ask it to compare two SEO keywords to target, ChatGPT will invent the search volume for each one and pass it off without any sign that something might be off.

Check out the video above. All of these commenters already learned that lesson at some point, and some might have learned it the hard way. Wrong data can have you prioritizing the wrong projects. It can affect your strategy and flat-out take you down the wrong path.

So now I know. ChatGPT makes up data it doesn't have, so we're giving it some of ours.

We're not suggesting you give up the ChatGPT you've come to love. We just want to make it more reliable. And soon, we'll show you how to give it superpowers.

Get SpyFu data in ChatGPT.